Sensor Fusion for Self-Driving Cars

A self-driving car has essentially three main system functions: (1) sensor-related functions, (2) processing-related functions, which we tend to think of as the AI of the self-driving car, and (3) control-related functions that operate the accelerator, the brakes, the steering wheel, and so on.

Today I am going to discuss mainly the sensors and an important aspect of using sensory data, called sensor fusion. That said, it is crucial to realize that all three of these main systems must work in coordination with each other. If you have the best sensors but the AI and the control systems are weak, you won’t have a good self-driving car. If you have lousy sensors and yet strong AI and control capabilities, you will likely still have problems, because without good sensors the car won’t know what exists in the outside world as it drives and could ram into things.

As the Executive Director of the Cybernetic Self-Driving Car Institute, I am particularly interested in sensor fusion, and so I thought it might be handy to offer some insights on that particular topic. But, as noted above, keep in mind that the other systems and their functions are equally important to having a viable self-driving car.

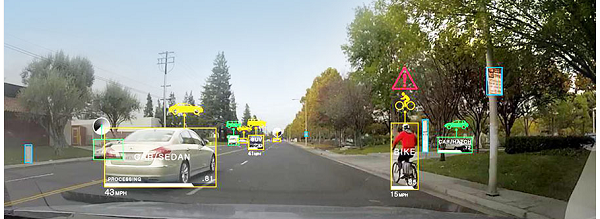

A self-driving car needs sensors that detect the world, and there need to be subsystems that handle the sensors and the sensory data being collected. These can be sensors such as cameras that collect visual images, radar that uses radio waves to detect objects, LIDAR (see my column about LIDAR) that uses laser light, ultrasonic sensors that use sound waves, and so on. There are passive sensors, such as the camera, which merely accepts incoming light and thereby receives images, and there are active sensors, such as radar, which sends out an electromagnetic radio wave and then receives the reflected wave to determine whether an object was detected.
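As a rough illustration of how an active sensor can turn a returned echo into a distance, here is a minimal Python sketch of the time-of-flight idea: the wave travels out and back, so the range is half the round-trip time multiplied by the wave speed. This is a simplification and not a description of any particular radar, LIDAR, or ultrasonic unit. The function name `range_from_echo` and the sample timings are assumptions made up for the example.

```python
# Assumption: a simple pulse-echo (time-of-flight) model, for illustration only.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # radio waves and laser light
SPEED_OF_SOUND_M_PER_S = 343.0          # approximate, in air at about 20 degrees C


def range_from_echo(round_trip_seconds: float, wave_speed_m_per_s: float) -> float:
    """Return the distance to a reflecting object, in meters.

    The pulse covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return wave_speed_m_per_s * round_trip_seconds / 2.0


# Hypothetical echo timings, chosen only to show the scale of the numbers.
radar_range_m = range_from_echo(400e-9, SPEED_OF_LIGHT_M_PER_S)
ultrasonic_range_m = range_from_echo(0.01, SPEED_OF_SOUND_M_PER_S)

print(f"radar echo after 400 ns  -> about {radar_range_m:.1f} m")
print(f"ultrasonic echo after 10 ms -> about {ultrasonic_range_m:.2f} m")
```

The contrast in timings hints at why the sensors suit different jobs: a light-speed echo from tens of meters away returns in well under a microsecond, while sound takes milliseconds to cover a couple of meters.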

The sensory data needs to be combined in some fashion to make sense of it, a step referred to as sensor fusion. Usually, the overarching AI processing system maintains a virtual model of the world within which the car is driving, and the model is updated as new data arrives from the sensors. Based on the result of the sensor fusion, the AI then needs to decide what actions should be undertaken, and it emits appropriate commands to the controls of the car to carry out those actions.
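To make the idea of fusing readings into a world model a bit more concrete, here is a minimal Python sketch. It assumes two sensors each report a distance to the same object along with an uncertainty (variance), and it combines them by inverse-variance weighting, which is one simple, well-known fusion technique and not necessarily what any production self-driving system uses. The names `Measurement`, `fuse`, `update_world_model`, the object ID, and all of the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    sensor: str          # which sensor produced the reading
    distance_m: float    # estimated distance to the object, in meters
    variance_m2: float   # assumed uncertainty of that estimate (must be > 0)


def fuse(measurements: list[Measurement]) -> tuple[float, float]:
    """Combine independent distance estimates by inverse-variance weighting.

    More certain sensors (smaller variance) get proportionally more weight.
    Returns the fused distance and the fused variance.
    """
    if not measurements:
        raise ValueError("need at least one measurement to fuse")
    weights = [1.0 / m.variance_m2 for m in measurements]
    total_weight = sum(weights)
    fused_distance = sum(w * m.distance_m for w, m in zip(weights, measurements)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_distance, fused_variance


def update_world_model(model: dict, object_id: str, measurements: list[Measurement]) -> dict:
    """Store the fused estimate for one tracked object in the world model."""
    distance, variance = fuse(measurements)
    model[object_id] = {"distance_m": distance, "variance_m2": variance}
    return model[object_id]


# Hypothetical readings: the camera is assumed noisier at range than the radar.
world_model: dict = {}
readings = [
    Measurement("camera", distance_m=21.0, variance_m2=4.0),
    Measurement("radar", distance_m=20.0, variance_m2=1.0),
]
entry = update_world_model(world_model, "vehicle_ahead", readings)
print(entry)
```

With these made-up inputs the fused distance lands closer to the radar reading, since the radar was assumed to be the more certain sensor, and the fused variance is smaller than either input variance. A real system would also have to handle timing differences between sensors, object association, and conflicting readings, none of which this sketch attempts.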

Read more: https://aitrends.com/ai-insider/sensor-fusion-self-driving-cars/
